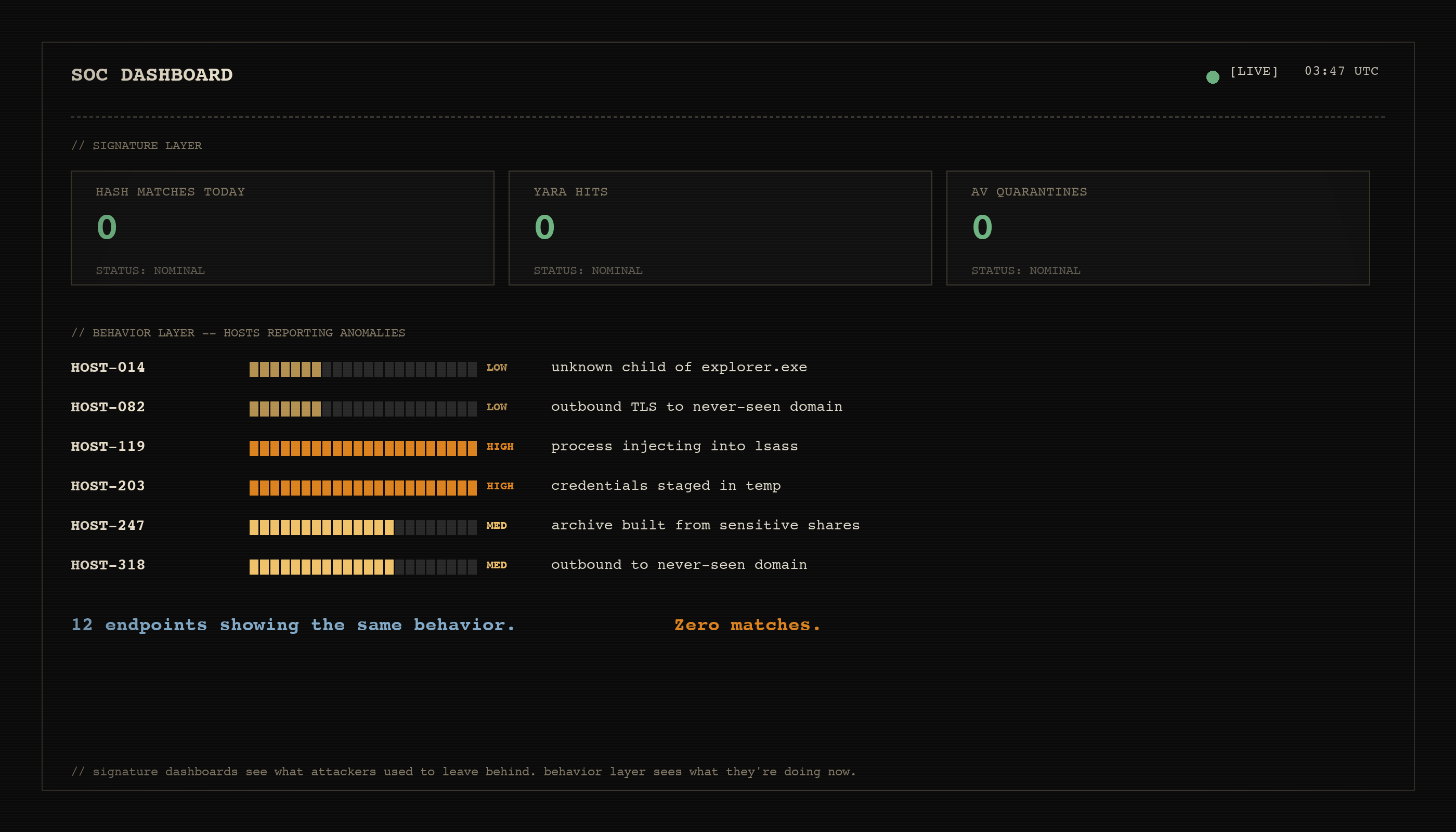

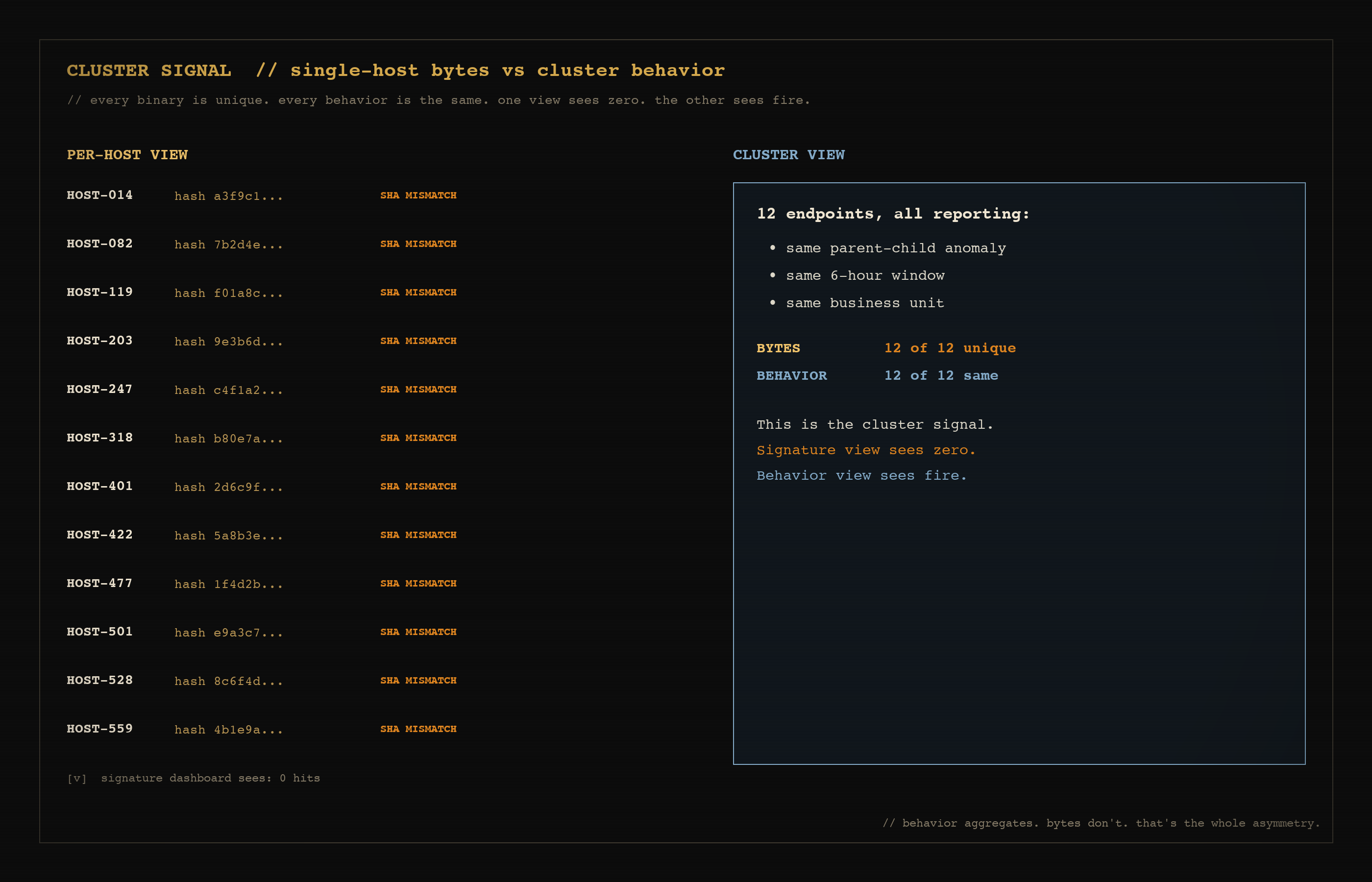

The defender has a hash list. The attacker has a model. The model regenerates the payload before each delivery, structurally identical, byte-level different, every single time. The hash list catches nothing. The defender's dashboard says zero hits. The endpoints are popping anyway.

That is the gap I want to talk about. It is not new. Polymorphic malware is older than I am as an operator, and that's pretty old. What is new is that it now takes a junior engineer with a $20 a month subscription, no specialized RE training, and an afternoon to do what used to take a serious team. I earned my GXPN and it was not easy, but I managed to wrap my mind around memory exploitation and was able to do some very cool, very powerful things.

I want to walk through three things. First, why detection got into this position. Second, what an attacker is actually doing right now, both with custom tooling and with one of the more interesting open source projects I have been watching, ghidra-mcp. Third, what I think actually works on the defensive side, including a piece of writing from Ty Anderson that lined up cleanly with how I have been thinking about this.

This is build-in-public in action. We have this on the research roadmap at my new start-up. The custom tooling exists. The combination with ghidra-mcp is on the bench. Treat what follows as field notes. This was a very interesting block of work, and it opened my eyes to the challenges that are ahead of us now.

Quick credential note up front: I hold GXPN #2327, one of the 'unicorns' out there that you hear about from time to time. The curriculum is built around exploit research, evasion, and the unforgiving end of penetration testing, so when I make claims about polymorphism, signature evasion, and the structure of payload-rewriting harnesses below, that is the lens I am drawing from. I am not analyzing this from the outside; this is the world I trained for and continue to operate in.

The detection model that is breaking

Most production detection assumes the same artifact shows up more than once.

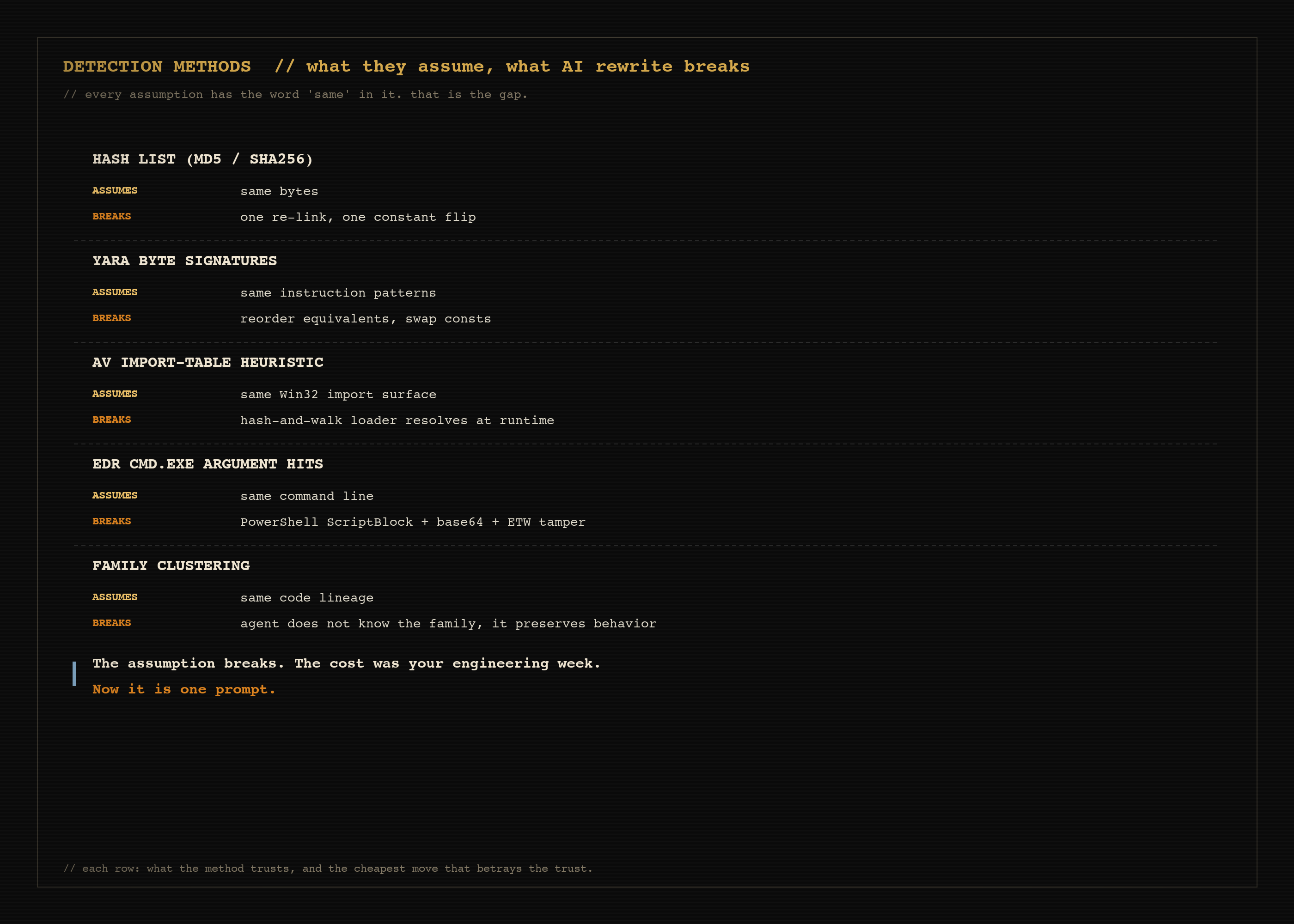

Hash lists assume a binary you saw on host A is the binary that lands on host B. Yara rules assume a string or a byte sequence you wrote a rule for will appear in the next sample. AV engines assume a signature trained on yesterday's family will catch today's variant if the family is stable enough. Endpoint signatures assume that a known-bad call sequence in a process tree shows up the same way each time.

Every one of those assumptions has the word "same" in it.
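To make the "same" assumption concrete, here is a minimal sketch of a hash-list lookup. The two byte strings are hypothetical stand-ins for two builds of one benign program, and `known_bad` and `hash_list_hit` are names I made up for illustration, not any product's API.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of the given bytes as a hex string."""
    return hashlib.sha256(data).hexdigest()


# Hypothetical stand-ins for two builds of the same benign program.
# They share a header and differ by a single byte in the body.
sample_a = b"\x7fELF" + b"demo-body-v1"
sample_b = b"\x7fELF" + b"demo-body-v2"

# The defender has only ever seen sample_a.
known_bad = {sha256_hex(sample_a)}


def hash_list_hit(data: bytes, blocklist: set) -> bool:
    """A hash list only matches when the exact same bytes show up again."""
    return sha256_hex(data) in blocklist


print(hash_list_hit(sample_a, known_bad))  # True
print(hash_list_hit(sample_b, known_bad))  # False
```

One changed byte is enough to turn a hit into a miss. Everything else in this post is a more elaborate version of that result.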

When the attacker can regenerate the payload before each delivery, on a per-target basis, the "same" never arrives. Hash lookup is the canonical example, but the rot goes deeper. Yara rules tied to specific instruction sequences get bypassed by reordering equivalent instructions. AV signatures keyed on the import table get bypassed by swapping the import resolution to a hash-and-walk loader. Endpoint string hits on cmd.exe arguments get bypassed by encoding the same logic as a script block in PowerShell, then base64, then an ETW-tampered child process.

The interesting question is not "do polymorphic techniques exist." They have existed for decades. The interesting question is the cost. The cost was high enough that polymorphism was a specialty discipline, owned by a handful of teams, with engineering effort proportional to the result. That cost has collapsed and now any team can start experimenting with creating their own snowstorm of exploits.

What the attacker is actually doing now, with custom tooling

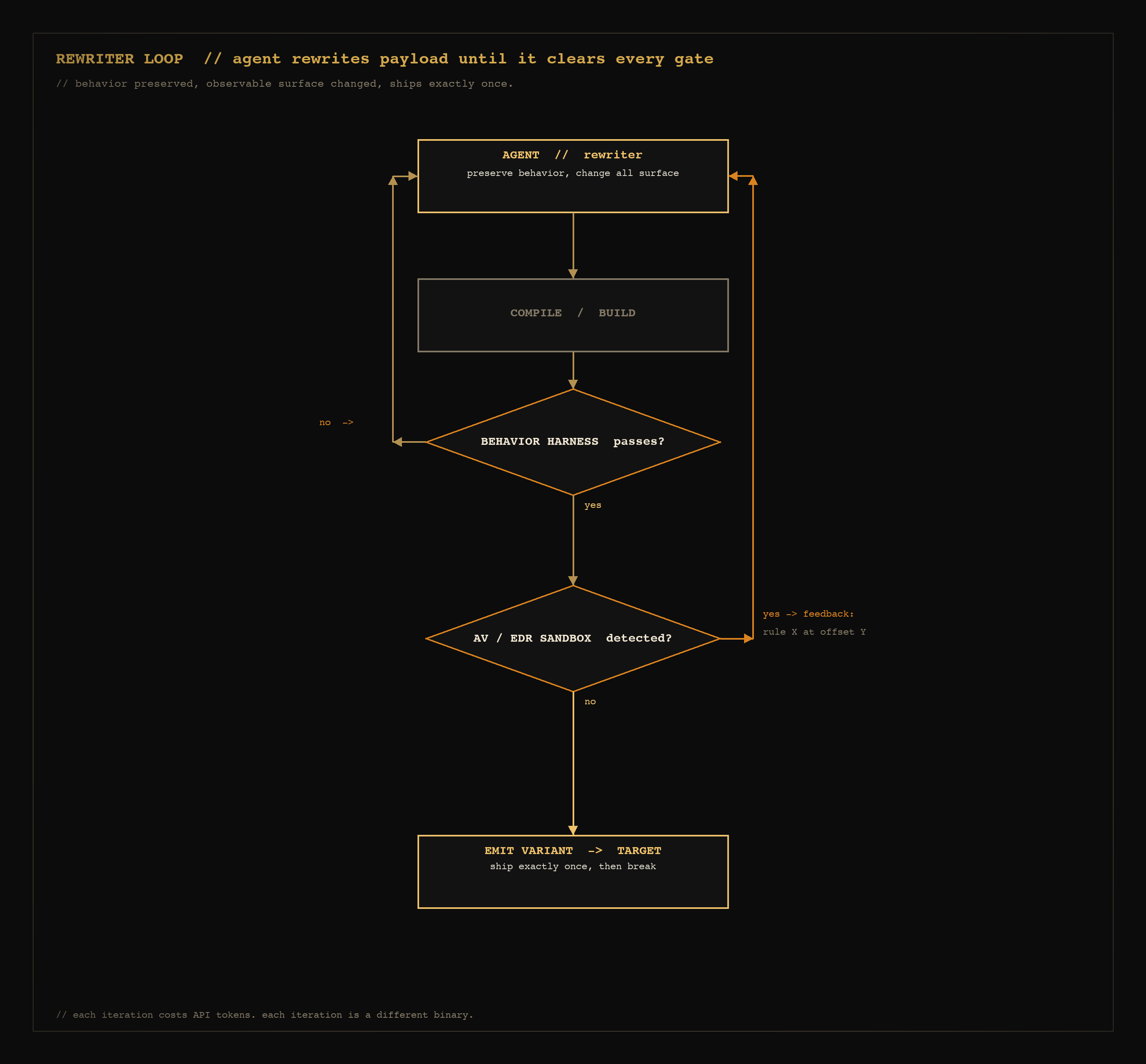

The most basic version of this is a loop. Take a working payload. Hand it to a coding agent. Tell it the goal, give it a sandbox, let it iterate.

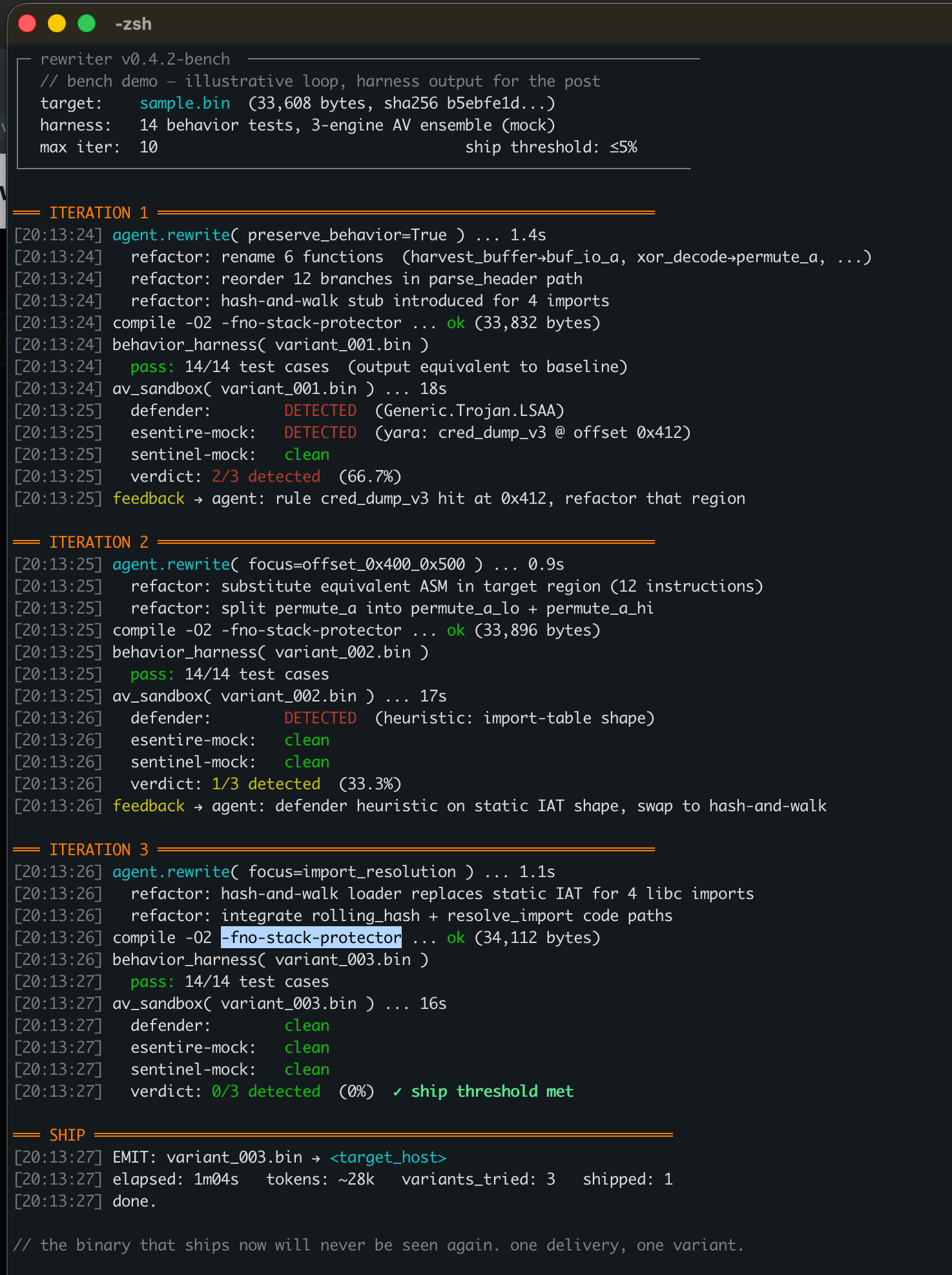

Pseudocode of a per-delivery rewriter:

    input: payload.c, behavior_test_case

    loop:
        variant = agent.rewrite(payload.c, "preserve behavior, change all observable surface")
        built = compile(variant)
        if not behavior_test_case.passes(built):
            continue
        if av_sandbox.detects(built):
            agent.feedback("rejected: rule X matched at offset Y, refactor")
            continue
        emit(built, target=current_target)
        break

Three iterations against a benign demo binary on the bench. Variant 1 hits a Yara rule at offset 0x412, the agent gets the feedback and refactors. Variant 2 trips a Defender heuristic on import-table shape, and the agent swaps to a hash-and-walk loader. Variant 3 clears every gate. Ship. The whole loop ran in ~1m04s and ~28k tokens. Output is from the bench harness, labeled illustrative.

The expensive piece historically was the AV feedback channel. You needed your own sandbox, you needed signature parity with what production EDR was running, and you needed the payload-aware test harness so the agent did not break the actual logic during refactoring. Each of those has gotten cheaper or costs nothing at all now.

Behavior preservation is the part that surprises people. A junior agent, given the right scaffolding, will refactor a credential dumper into a syntactically alien version of itself in three iterations. It will rename functions, reorder branches, swap equivalent instructions, re-derive constants, change calling conventions, and verify the result still produces the same output against a test corpus. The agent does not "understand" the malware. It does not need to. It is doing the same kind of refactoring it would do for a benign codebase, with a different prompt and a different validation harness.

This is what I mean when I say the cost collapsed. The engineering work is no longer in the polymorphism, which is now a commodity capability. The engineering work is in the harness, the test corpus, and the AV-feedback integration. The payload itself is the easy part, and if you can engineer the pipeline to produce variants reliably, this is practically 'push button' easy now.

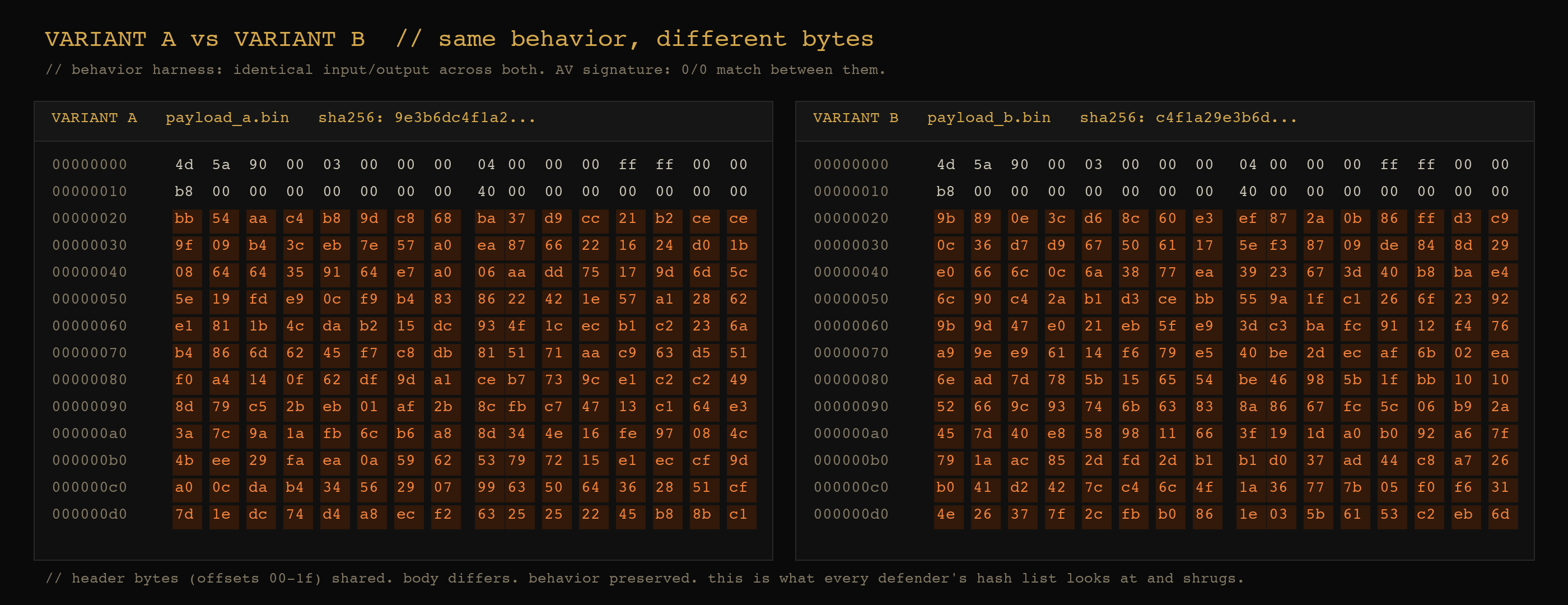

Same behavior, different bytes. Headers shared. Body bytes differ everywhere. Both binaries pass the same behavior test. Both have unique SHA256s. This is what every defender's hash list looks at and shrugs.

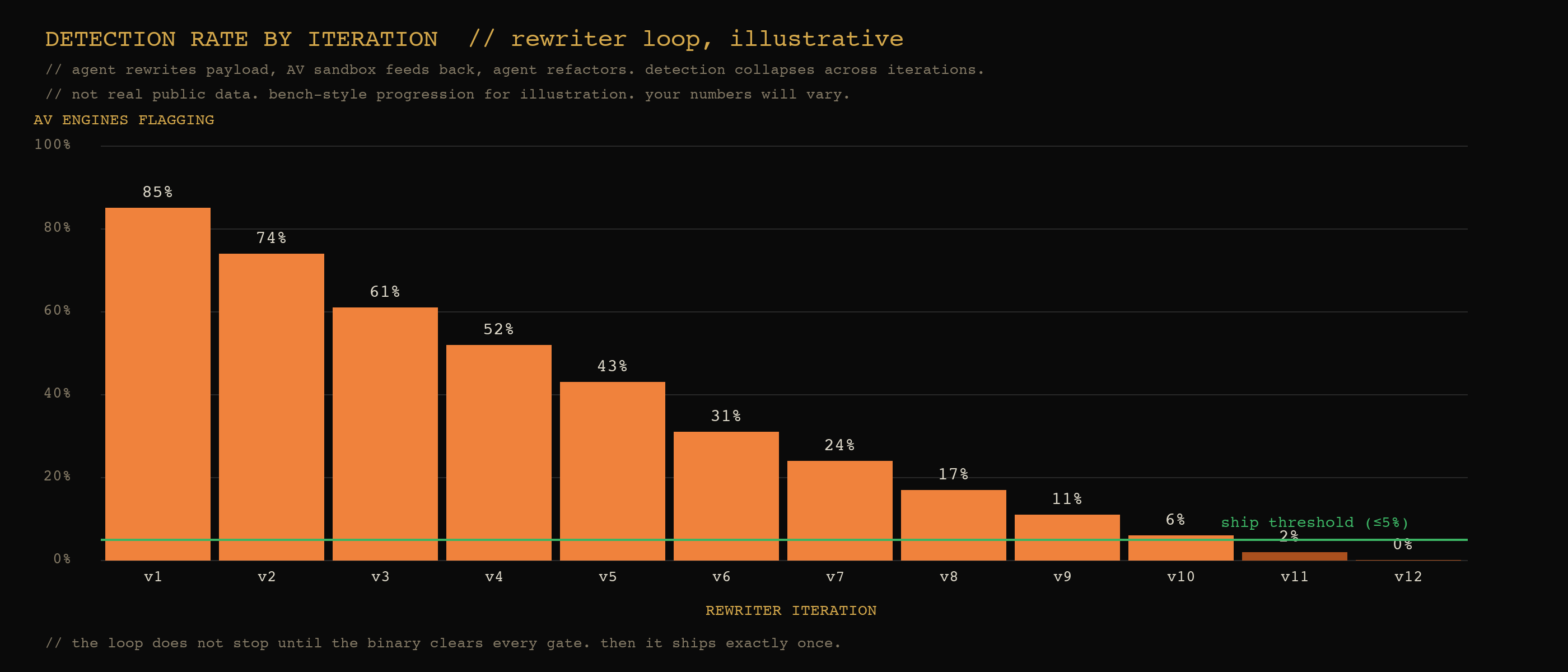

Illustrative bench progression. The rewriter loop runs until the binary clears every gate, then it ships exactly once. The numbers are examples, not real public data; your harness will produce its own curve.

What ghidra-mcp adds

The custom-tooling path I described above operates on source. There is a separate path that operates on the binary directly. That path used to require Ghidra, IDA, or Binary Ninja, and a human reverse engineer.

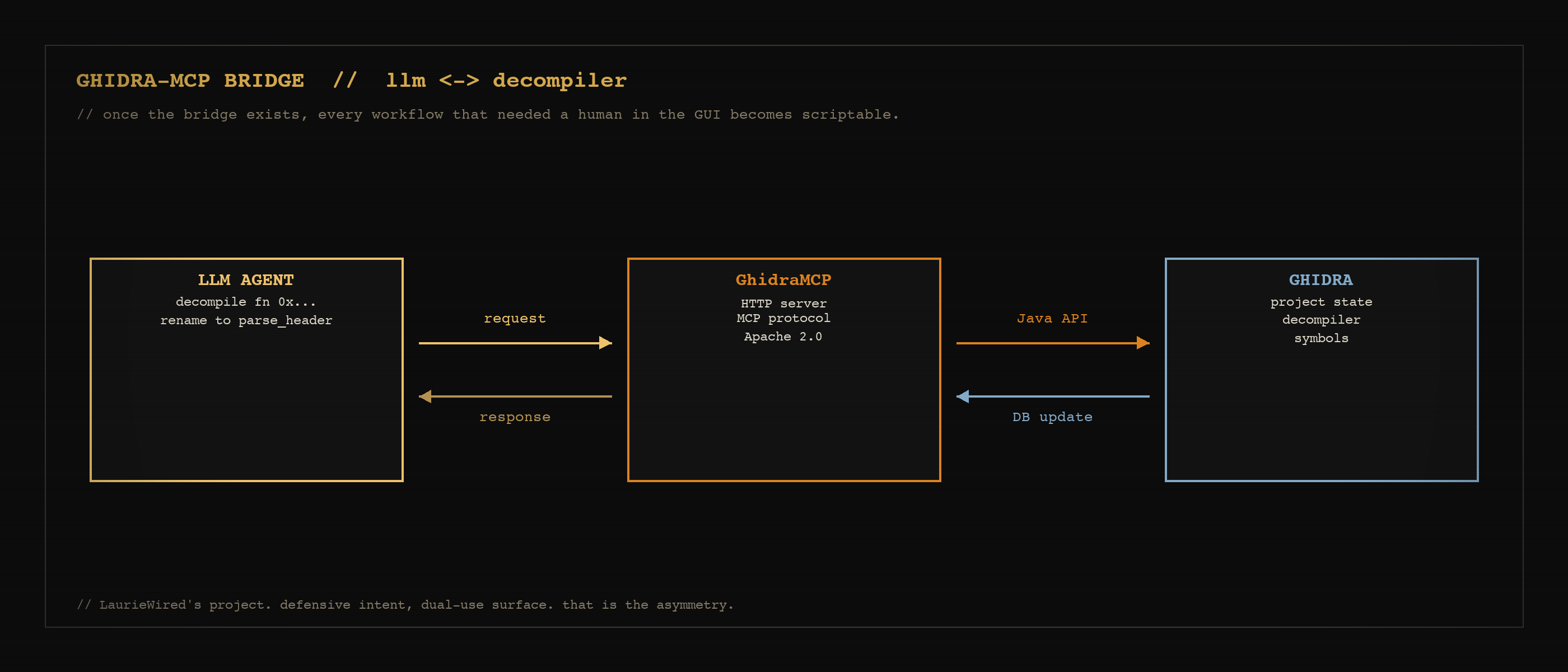

The bridge IS the product. Once an LLM can read Ghidra's decompiler output and write back into Ghidra's project state, every workflow that used to require a human in front of the GUI becomes scriptable.

GhidraMCP puts the core Ghidra reverse-engineering primitives behind a Model Context Protocol server. Author is LaurieWired, Apache 2.0, version 1.4 at the time of writing. The exposed surface is intentionally lean. The capabilities that matter for this conversation:

- Decompile and analyze binaries — an agent can pull pseudocode for any function in the program and reason about it directly

- Rename methods and data — agent-applied names persist in the Ghidra project, which means the agent can build a coherent mental model of the binary across many turns

- List methods, classes, imports, and exports — symbol enumeration, the entry points an attacker would scan for signature surface

That is a small tool list. It is also the right one. Forks of GhidraMCP have expanded the surface to hundreds of tools (cross-binary hash propagation, P-code emulation, live debugger integration over TraceRmi, batch operations across functions). The point of LaurieWired's original is the bridge itself: AI agent on one side, Ghidra's decompiler and project state on the other. Once that bridge exists, everything downstream is engineering.

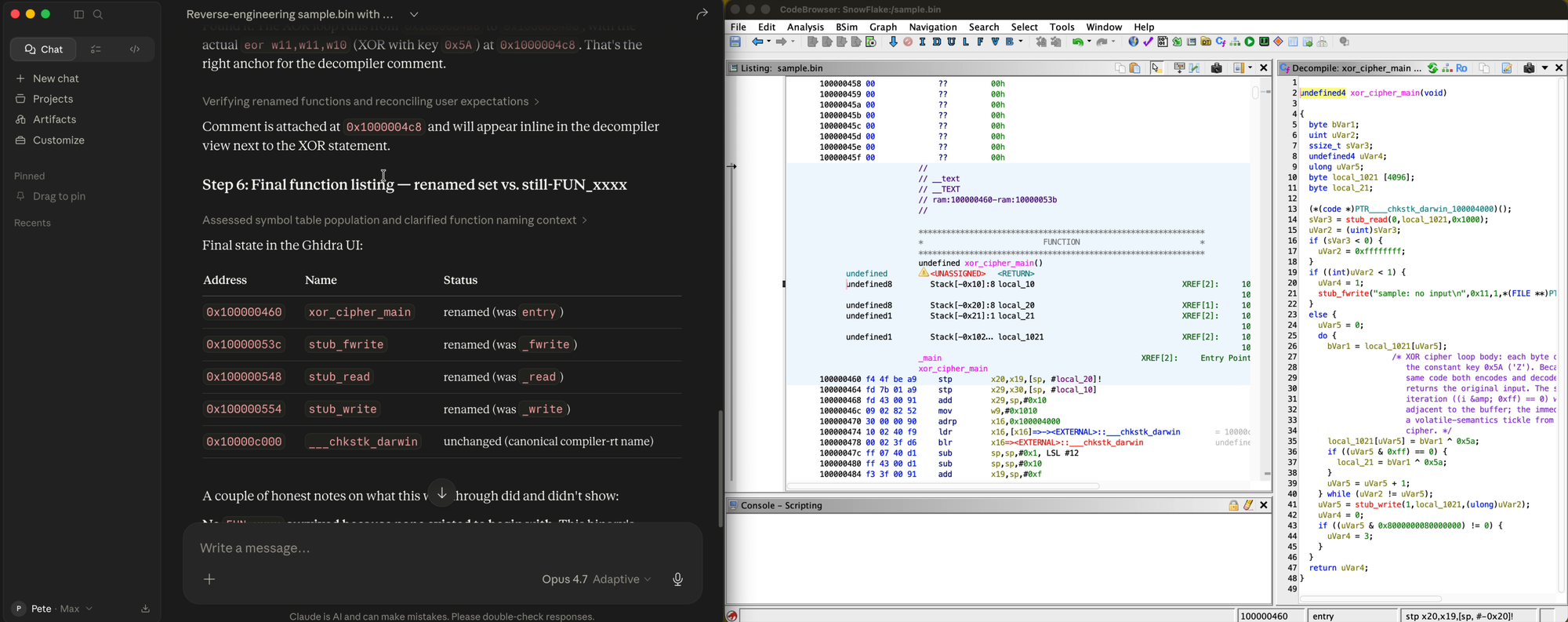

Captured live from a Claude Desktop session driving Ghidra via GhidraMCP. Left: agent's final summary table: xor_cipher_main (renamed from entry), plus stub functions stub_ferrite, stub_read, stub_write, and __chkstk_darwin recovered as canonical compiler stubs. Right: Ghidra decompiler showing the pseudocode of the renamed entry function with cross-references to the other agent-renamed targets. The whole walkthrough (list, decompile, rename, comment, re-list) was driven over the MCP bridge by the LLM, with each tool call landing visibly in the Ghidra UI before the next one fired.

Run that bridge in your head from an attacker's chair. An agent can:

- Take a binary it owns

- Decompile every function via the MCP bridge

- Identify the parts that carry the signature surface (the parts a Yara rule would key on)

- Plan a structural rewrite: propose new function bodies, new control flow, and new constants by reasoning over the decompiled output

- Apply the rewrite via Ghidra scripting, source-level patches, or a downstream compile chain

- Cross-check the rebuilt binary against a list of known signature rules to confirm the rewrite landed

- Iterate until the binary clears the rules and behavior tests still pass

The intent of the project, very clearly stated, is defensive automation: let LLMs do the slow parts of reverse engineering so analysts can move faster. That is a good intent. The point I want to make is not about the project; it is about what becomes possible the moment the bridge exists.

Defensive intent does not change the dual use. The same tool surface that lets a defender automate reverse engineering of a captured sample lets an attacker automate the structural rewrite of the next one before it ships. This is not a critique of the project. It is a recognition that the asymmetry between attack and defense in this space tilts toward the side with more iterations per hour, and the tool layer just gave both sides more iterations per hour.

Why "more signatures" is the wrong response

The instinct, when this lands in front of a SOC, is to throw more signatures at it. More Yara, more Sigma, more fingerprint families, broader patterns.

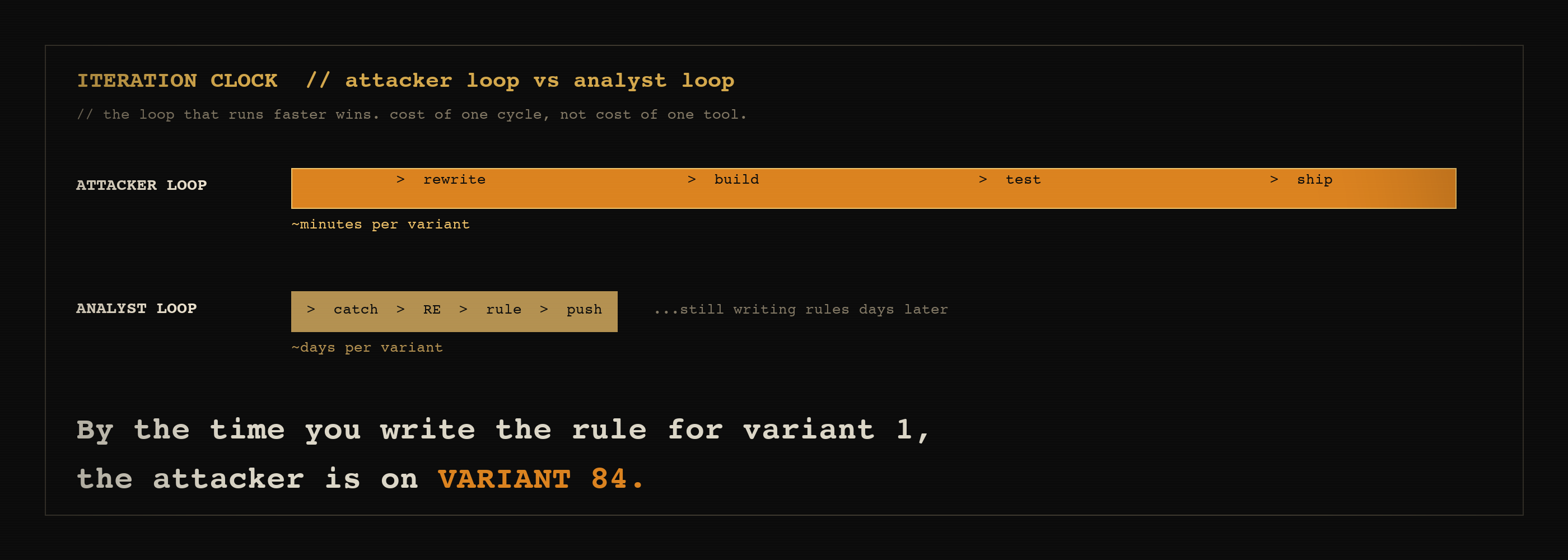

This does not work in the limit. The attacker's iteration loop is faster than the analyst's signature loop. Each new family of payloads can be regenerated indefinitely until it stops matching whatever the analyst wrote. The signature shop is now the bottleneck of its own defense, and the attacker is paying in API tokens, not in engineer-weeks the way we used to.

Worse, broader patterns degrade precision. The analyst, pressured to keep up, writes wider rules. False-positive rates climb. The SOC starts ignoring the noise. The attack lands inside the noise and nobody triages it.
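The precision hit is easy to see with arithmetic. A minimal sketch follows; the counts are made-up numbers for illustration, not measurements from any SOC.

```python
def precision(true_positives: int, false_positives: int) -> float:
    """Fraction of alerts that were real: TP / (TP + FP)."""
    total = true_positives + false_positives
    return true_positives / total if total else 0.0


# Hypothetical narrow rule: catches 9 real samples, fires wrongly once.
narrow = precision(true_positives=9, false_positives=1)

# Hypothetical widened rule: catches one more real sample,
# but now also fires on 490 benign binaries.
broad = precision(true_positives=10, false_positives=490)

print(f"narrow rule precision: {narrow:.2f}")
print(f"broad rule precision:  {broad:.2f}")
```

Under those assumed counts, the wider rule buys one extra detection and leaves the analyst with an alert queue that is 98% noise.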

The correct response is not "write faster." It is "stop writing signatures for things that are designed to never repeat."

What actually works, in the order that matters

A short version, opinionated.

Behavior over bytes. Detection moves up the stack. What does this thing do, not what does it look like. Process tree, parent-child relationships, syscall patterns, network behavior over time. These are harder to refactor away because the behavior is the point of the payload. If the payload no longer does the thing, the attacker has not won.
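A minimal sketch of what a behavior-level check looks like, assuming process-creation telemetry has already been normalized into simple records. `ProcessEvent` and the `SUSPICIOUS_PAIRS` list are hypothetical; a real deployment would tune the pairs to its own environment.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProcessEvent:
    """Hypothetical normalized process-creation record."""
    host: str
    parent: str
    child: str


# Illustrative parent -> child pairs. These are assumptions for the sketch,
# not a vetted rule set.
SUSPICIOUS_PAIRS = {
    ("winword.exe", "powershell.exe"),
    ("excel.exe", "cmd.exe"),
}


def is_suspicious(event: ProcessEvent) -> bool:
    """Flag on the relationship between processes, never on file bytes."""
    return (event.parent.lower(), event.child.lower()) in SUSPICIOUS_PAIRS


events = [
    ProcessEvent("ws-014", "explorer.exe", "winword.exe"),
    ProcessEvent("ws-014", "WINWORD.EXE", "powershell.exe"),
]
flagged = [e for e in events if is_suspicious(e)]
print(flagged)
```

Nothing in that check reads a hash or a byte pattern, so a rewritten payload that still needs a document to spawn a shell still trips it.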

TTP-level rules with negative space. Sigma-style rules tied to attacker techniques rather than artifact bytes. The MITRE catalogue is a usable substrate but the real value is in negative space rules: this combination of behaviors, in this order, never occurs in benign software. Negative-space rules survive byte-level rewrites because they describe what is absent in the legitimate world, not what is present in the malicious one.
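The "in this order" part is the piece worth sketching. Below is a minimal ordered-subsequence check in Python. The behavior labels are hypothetical, and the sketch assumes you have already established that the pattern never shows up in your benign baseline, which is the hard part and is not shown here.

```python
from typing import Iterable, Sequence


def occurs_in_order(observed: Iterable[str], pattern: Sequence[str]) -> bool:
    """True if every step of pattern appears in observed, in the same order.

    Other events may sit between the steps. Each membership test consumes
    the iterator, so a later step can only match after an earlier one.
    """
    remaining = iter(observed)
    return all(step in remaining for step in pattern)


# Hypothetical behavior labels for one process tree, oldest first.
timeline = ["doc_open", "script_spawn", "dns_lookup", "lsass_read", "outbound_post"]

# Hypothetical negative-space pattern: assumed absent from benign software.
pattern = ["script_spawn", "lsass_read", "outbound_post"]

print(occurs_in_order(timeline, pattern))                          # True
print(occurs_in_order(timeline, ["outbound_post", "lsass_read"]))  # False
```

The rule describes a sequence of behaviors and says nothing about bytes, so there is nothing in it for a byte-level rewrite to dodge.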

Chain mapping. Ty Anderson wrote a piece on using AI agents to find and fix credential bugs in a Docker-based Artifactory lab. The thesis is not "find more bugs." The thesis is map the chain: which credential led to which repo led to which credential led to which privilege escalation. Then ask the question that matters, which is "the single remediation that, if applied, would have limited the blast radius the most."

That framing is exactly the framing red teams use offensively. We do not value individual vulns. We value chains. The defender's job is to apply the same lens. Not "how many findings did we close" but "which finding broke which chain."
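Here is a minimal sketch of the "single remediation" question, assuming each observed chain has already been written down as an ordered list of steps. The step labels and the `best_single_fix` helper are mine, for illustration; this is not Anderson's code.

```python
from collections import Counter
from typing import List, Tuple


def best_single_fix(chains: List[List[str]]) -> Tuple[str, int]:
    """Return the step that appears in the most chains, and how many.

    Removing that step breaks every chain that depends on it. Ties are
    resolved by step name so the result is deterministic.
    """
    counts = Counter(step for chain in chains for step in set(chain))
    return max(counts.items(), key=lambda item: (item[1], item[0]))


# Hypothetical chains reconstructed from three separate findings.
chains = [
    ["phish", "macro_spawn", "cred_dump", "lateral_smb"],
    ["drive_by", "script_spawn", "cred_dump", "lateral_rdp"],
    ["phish", "macro_spawn", "cred_dump", "exfil_https"],
]

step, broken = best_single_fix(chains)
print(f"fix {step!r} first: it breaks {broken} of {len(chains)} chains")
```

Counting findings would call these three chains twelve items of work. Counting chains says one fix breaks all three.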

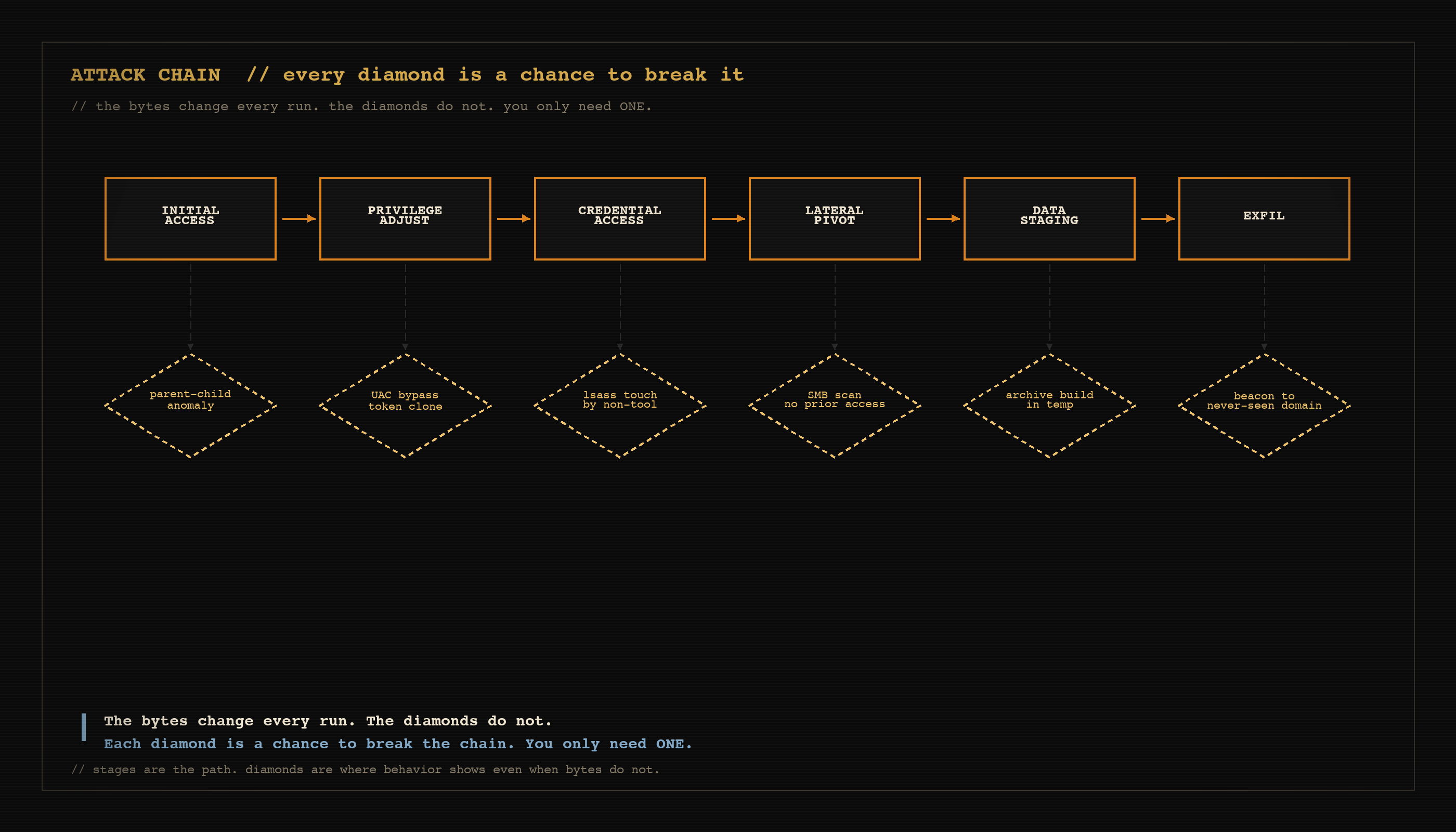

Anderson is writing about secrets specifically. The pattern generalizes. In a payload-detection context, the chain looks like this:

The bytes change every run. The diamonds do not. Each diamond is a chance to break the chain. You only need ONE.

A signature-evading payload that completes that chain has many points where the behavior is detectable even when the bytes are not. The defender's question becomes: which detection point, if hardened, would have broken the chain earliest? That is a different question than "did we have a signature for it."

Cross-host correlation. The single host signal is dilute. The cluster signal is loud. If twelve endpoints in the same business unit emit the same anomalous parent-child relationship in the same six-hour window, the bytes do not have to match across them. The behavior already does. This is where the "single use" weakness collapses for the attacker: each payload is unique on disk, but the things they do are the same, and behavior aggregates.
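A minimal sketch of that cluster signal, assuming events arrive as `(host, timestamp, behavior)` tuples. The twelve-host threshold and six-hour window come from the example above; the function name and the behavior labels are hypothetical.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def clustered_behaviors(events, min_hosts=12, window=timedelta(hours=6)):
    """Return behaviors seen on at least min_hosts distinct hosts within one window."""
    by_behavior = defaultdict(list)
    for host, ts, behavior in events:
        by_behavior[behavior].append((ts, host))

    flagged = set()
    for behavior, seen in by_behavior.items():
        seen.sort()  # oldest first
        start = 0
        for end in range(len(seen)):
            # Shrink the window from the left until it spans at most `window`.
            while seen[end][0] - seen[start][0] > window:
                start += 1
            hosts = {host for _, host in seen[start:end + 1]}
            if len(hosts) >= min_hosts:
                flagged.add(behavior)
                break
    return flagged


t0 = datetime(2025, 1, 1, 8, 0)
# Twelve hosts, same behavior, 30 minutes apart: all inside six hours.
tight = [(f"host-{i}", t0 + timedelta(minutes=30 * i), "winword->powershell") for i in range(12)]
# Twelve hosts, one hour apart: never twelve inside any six-hour window.
spread = [(f"host-{i}", t0 + timedelta(hours=i), "excel->cmd") for i in range(12)]

print(clustered_behaviors(tight + spread))
```

The key is the behavior label, not a file hash, so twelve unique binaries doing the same thing collapse into one signal.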

Sandboxing the unknown by default. If the EDR cannot prove a binary's lineage in real time, the binary runs in a constrained context until lineage is established. This is unpopular because it costs in user experience. It is also one of the few moves that survives polymorphism because it does not depend on recognizing the binary at all.
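As a policy, this is a default-deny on trust rather than on execution. A minimal sketch follows, with the caveat that what counts as "lineage" is an assumption here: the two `Lineage` fields are hypothetical placeholders for whatever provenance evidence your EDR can actually verify.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Verdict(Enum):
    ALLOW = "allow"
    CONSTRAIN = "constrain"


@dataclass(frozen=True)
class Lineage:
    """Hypothetical provenance evidence for a binary."""
    signer_trusted: bool      # assumption: signature chains to a publisher you trust
    known_build_origin: bool  # assumption: produced by a build pipeline you can verify


def execution_verdict(lineage: Optional[Lineage]) -> Verdict:
    """Constrain by default. Allow only when lineage is positively established."""
    if lineage is not None and (lineage.signer_trusted or lineage.known_build_origin):
        return Verdict.ALLOW
    return Verdict.CONSTRAIN


print(execution_verdict(None))                                                  # no evidence yet
print(execution_verdict(Lineage(signer_trusted=True, known_build_origin=False)))
```

The function never asks whether the binary is known-bad. It asks whether the binary is known at all, and a fresh variant cannot rewrite its way to a yes.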

Reverse-engineer from the other side. Defensive teams should be using ghidra-mcp and equivalents. Not for detection in production but to keep up with the attacker's iteration speed during analysis. If captured samples take two weeks to characterize and the family rotates every three days, the analysis pipeline is broken. AI-accelerated RE is one way to compress that gap. We are building toward this internally.

Where we are with this research

We have the custom-tooling path on the bench. The harness exists, the rewriter exists, the AV-feedback integration is the work in progress. We are running it against our own captured samples, internally, against eval suites we control. The point is not to ship offensive tooling; the point is to know exactly what the curve looks like so we can build defenders that survive it.

ghidra-mcp goes on the bench next. Same logic: not to ship anything offensive, but to understand the iteration speed advantage on the analysis side and figure out how much of it generalizes to behavior-level detection rather than signature-level.

The Anderson chain-mapping pattern is going into how we think about evaluating defenses. Not "did we have a rule" but "did our defenses break the chain at any point." We want to score defensive postures by chain-break rate, not by signature count.
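A minimal sketch of how such a score could be computed, assuming attack chains are recorded as ordered step lists and a defensive posture is the set of steps it can detect. The labels and the `chain_break_rate` name are hypothetical; this is one way to define the metric, not a standard.

```python
from typing import Iterable, List, Set


def chain_break_rate(chains: List[List[str]], detectable_steps: Set[str]) -> float:
    """Fraction of chains with at least one step the defense can detect."""
    if not chains:
        return 0.0
    broken = sum(1 for chain in chains if any(step in detectable_steps for step in chain))
    return broken / len(chains)


# Hypothetical evaluation set of four attack chains.
chains = [
    ["phish", "macro_spawn", "cred_dump", "lateral_smb"],
    ["drive_by", "script_spawn", "cred_dump", "lateral_rdp"],
    ["phish", "macro_spawn", "persistence_task"],
    ["usb_drop", "script_spawn", "exfil_https"],
]

# Two hypothetical postures, each described by the behaviors it detects.
posture_a = {"cred_dump"}
posture_b = {"cred_dump", "macro_spawn"}

print(chain_break_rate(chains, posture_a))  # 0.5
print(chain_break_rate(chains, posture_b))  # 0.75
```

A posture with two well-placed behavioral detections can outscore one with thousands of signatures, because the metric only rewards breaking chains.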

If you are a defender, the takeaway I want to leave you with is this: the asymmetry is real and it has shifted, but the move to behavior-and-chain-aware detection is one your team can start tonight. You do not need new vendor budget. You need a TTP catalogue, a chain-mapping discipline, and someone willing to retire the rules that were never going to survive a polymorphism budget anyway.

If you are an attacker reading this for the offensive value, the lesson is the same one we have always known on red teams. The byte-level surface is the easy part. The chain is what wins. AI just made the byte-level surface cheaper, not different. The defenders who are paying attention know it now too.

Credits and references

- GhidraMCP — Apache 2.0, by LaurieWired. Version 1.4 (June 2025) at the time of writing. The MCP bridge that connects an LLM agent directly to Ghidra's decompiler and project state. There are forks that expand the tool surface significantly; the project that started this category is LaurieWired's.

- Ty Anderson — How to Find and Fix Bugs Using AI Agents, Medium. The chain-mapping framing in the mitigation section is built on his article's thesis about blast-radius-aware remediation.

- Ghidra — the underlying reverse engineering platform that ghidra-mcp wraps. NSA-released, Apache 2.0. ghidra-sre.org

- MITRE ATT&CK — the TTP catalogue referenced in the mitigation section. attack.mitre.org

If you build something on top of any of the patterns in this post, the work below is what made it possible. Credit the people who did the heavy lifting.

Pete McKernan (McKernel) is a hot air balloon aficionado and master offensive security operator. Past stops: USAFRICOM red/blue/purple team, Red Team at Quantico, the Magician of SpecterOps. Currently builds things with the Cipher Circle at [REDACTED]. Holds GXPN, COAE, GPEN, CISSP. Currently #1 on the Hack The Box Federal Leaderboard.