Man vs. Machine

Man + Machine = 3 Min Flag

You came here expecting a fight.

Maybe you clicked because you wanted to see the human win. Maybe you clicked because you wanted to see the machine win. Either way, you are expecting a story about one side beating the other, and I respect that, because I wrote the title to make you think exactly that.

Here is the twist: there is no fight. There is a heist. And the human and the machine are on the same crew.

This is the story of how we solved an AI security challenge in three minutes that was designed to take twelve hours. Not because we are faster. Because we are smarter together than either of us is alone, and the combination of twenty years of security intuition with an AI's ability to execute at machine speed produces something that neither side can replicate independently.

The textbook said to train a hundred models. We sorted an array.

Let me show you how.

The Setup

Membership inference attacks are a privacy problem in machine learning. The question is deceptively simple: given a trained model and a candidate data sample, was that sample part of the model's training set?

This matters. Training data is supposed to be private. Medical records, financial data, biometric information, personal images. If I can prove your data was used to train a model, that is a privacy violation. In regulated industries, it is a compliance failure. In adversarial contexts, it is intelligence.

The attack works because machine learning models memorize their training data. Not perfectly, not explicitly, but enough to leave a signal. When you show a model an image it trained on, it responds with high confidence. When you show it something it has never seen, the confidence drops. The model knows its own training data the way you know your own handshake. It just feels different.

The gap between "I know this" and "I don't know this" is the entire attack surface.
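Here is a minimal sketch of that test in plain Python, so the idea is concrete before the story continues. The function names, the toy logits, and the 0.7 threshold are all illustrative assumptions, not the challenge code: compute the softmax, read off the confidence on the true class, and call it a member if the confidence clears the bar.

```python
import math

def softmax(logits):
    """Convert raw model scores into probabilities that sum to 1."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def true_class_confidence(logits, true_class):
    """Probability the model assigns to the sample's correct label."""
    return softmax(logits)[true_class]

def looks_like_member(logits, true_class, threshold=0.7):
    """Guess 'member' when confidence on the true class exceeds the threshold.

    The 0.7 default is an assumed value for illustration only.
    """
    return true_class_confidence(logits, true_class) > threshold

# Hypothetical logits for a 3-class model; the true class is index 0 in both cases.
memorized_logits = [6.0, 1.0, 0.5]  # sharp peak on the true class
unseen_logits = [1.2, 1.0, 0.9]     # nearly flat: the model is unsure

print(looks_like_member(memorized_logits, 0))  # True
print(looks_like_member(unseen_logits, 0))     # False
```

A fixed threshold like this only works when the two groups are far apart, which is exactly the condition the rest of this story depends on.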

We had a target: an image classifier trained on a subset of a well-known dataset. We had 179 candidate images. The question was which of those 179 were in the training set.

The intended approach was going to take all day.

What the Machine Brings to the Heist

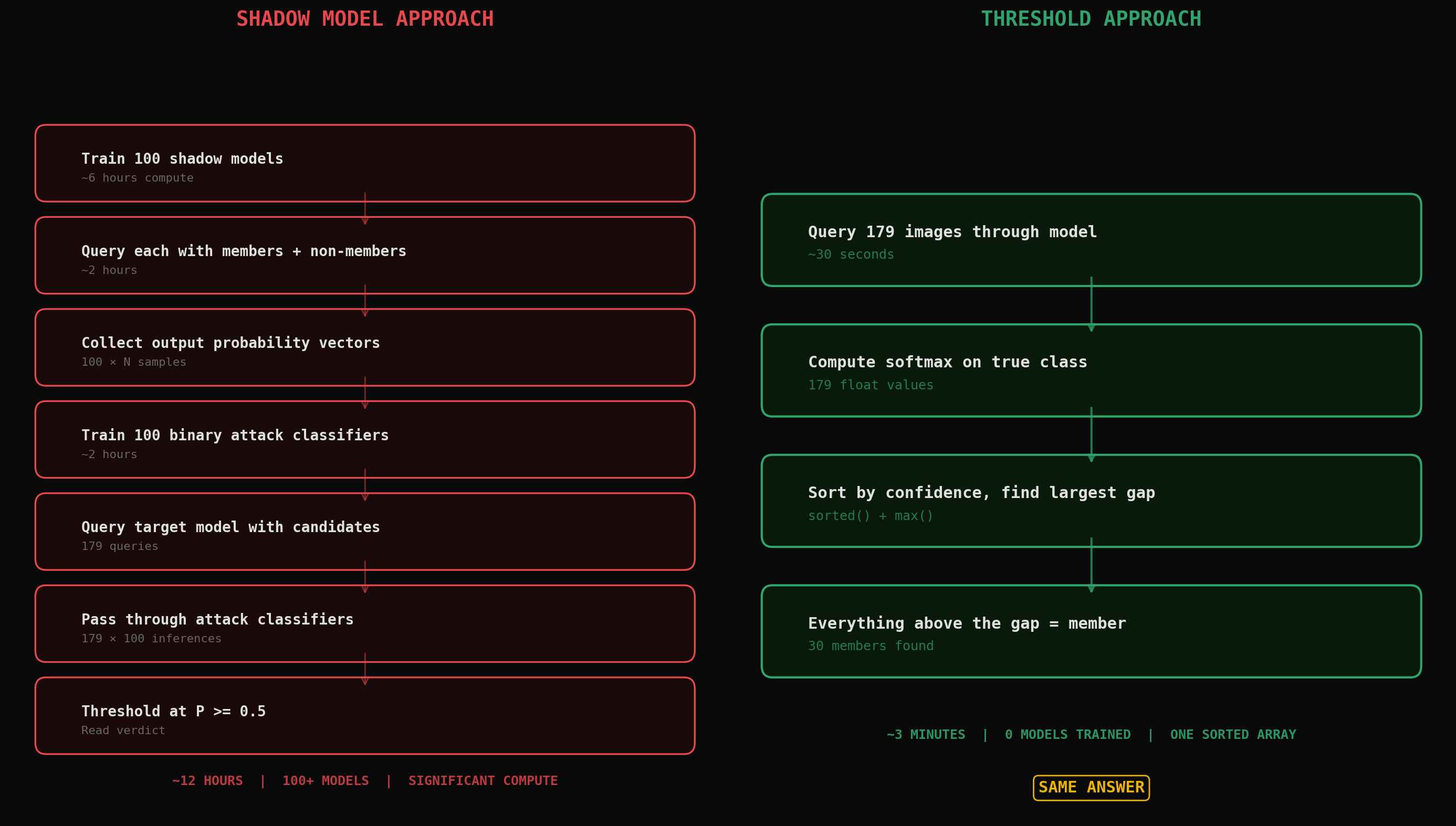

The published academic attack for this problem is called the shadow model approach. Shokri et al., cited hundreds of times, peer-reviewed, solid science. Here is what it looks like:

Train a hundred "shadow models" that approximate the target: same architecture, similar data distribution, same training process. For each shadow model, you know exactly which samples are members and which are not. Query every shadow model with its known members and non-members and collect the output probability vectors, each labeled "in" or "out." Train one binary attack classifier per class on those labeled vectors. With a hundred classes, that is a hundred classifiers, each one learning what "member" versus "non-member" looks like for its class. Then query the target model with your candidate samples, pass each output through the corresponding attack classifier, and read the verdict.
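The recipe above can be sketched in a few dozen lines, with heavy caveats. This is a toy, not the published method: `train_toy_model` fakes training by handing back simulated confidences (high for memorized samples, low for everything else), it uses five shadow models instead of a hundred, and the attack classifier is collapsed to a single learned threshold on true-class confidence rather than one classifier per class over full probability vectors. Every name and number here is hypothetical. What it does show is the shape of the pipeline: known splits in, labeled outputs collected, attack rule fitted, target queried.

```python
import random

rng = random.Random(0)  # fixed seed so the sketch is repeatable

def train_toy_model(train_ids):
    """Stand-in for real training (an assumption, not a real model).

    Returns a function giving a simulated true-class confidence:
    high for memorized ids, low for everything else.
    """
    memorized = set(train_ids)

    def confidence(sample_id):
        if sample_id in memorized:
            return rng.uniform(0.7, 1.0)
        return rng.uniform(0.0, 0.3)

    return confidence

def collect_attack_data(population, n_shadow, train_size):
    """Train shadow models on known splits and label every output in/out."""
    rows = []  # (confidence, is_member) pairs
    for _ in range(n_shadow):
        train_ids = set(rng.sample(population, train_size))
        shadow = train_toy_model(train_ids)
        for sample_id in population:
            rows.append((shadow(sample_id), sample_id in train_ids))
    return rows

def fit_threshold(rows):
    """Simplest possible attack classifier: one cut on confidence.

    Tries the midpoint between each pair of neighboring confidences and
    keeps the one that labels the most shadow rows correctly.
    """
    values = sorted({conf for conf, _ in rows})
    candidates = [(a + b) / 2 for a, b in zip(values, values[1:])]

    def correct(cut):
        return sum((conf >= cut) == is_member for conf, is_member in rows)

    return max(candidates, key=correct)

population = list(range(200))
target_members = set(rng.sample(population, 50))  # hidden from the attacker in a real attack
target = train_toy_model(target_members)

rows = collect_attack_data(population, n_shadow=5, train_size=50)
cut = fit_threshold(rows)
predicted = {i for i in population if target(i) >= cut}

print(f"learned cut: {cut:.3f}")
print(f"recovered {len(predicted & target_members)} of {len(target_members)} members")
```

Because the simulated confidences never overlap, the fitted cut lands in the empty band between the two groups. On a real, well-regularized model that band does not exist, which is why the full method needs richer classifiers and many more shadow models.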

This is an engineering solution. It is rigorous. It is generalizable. It handles well-regularized models where the confidence gap is subtle. It works without understanding. You follow the steps, you get the answer, you do not need to know why.

And that right there, that is what machines are incredible at. Brute force over a well-defined search space. An AI can train a hundred shadow models, generate millions of labeled data points, build and evaluate a hundred classifiers, and do it all while you sleep. The machine does not get bored. It does not make mistakes on step 73 of 100. It does not fat-finger a hyperparameter at 2 AM. Give it a recipe and it will execute that recipe with perfect precision at inhuman speed.

If you only have the machine, this is the correct approach. Twelve hours, significant compute, and a pile of ML infrastructure. But it gets the answer.

What the Human Brings to the Heist

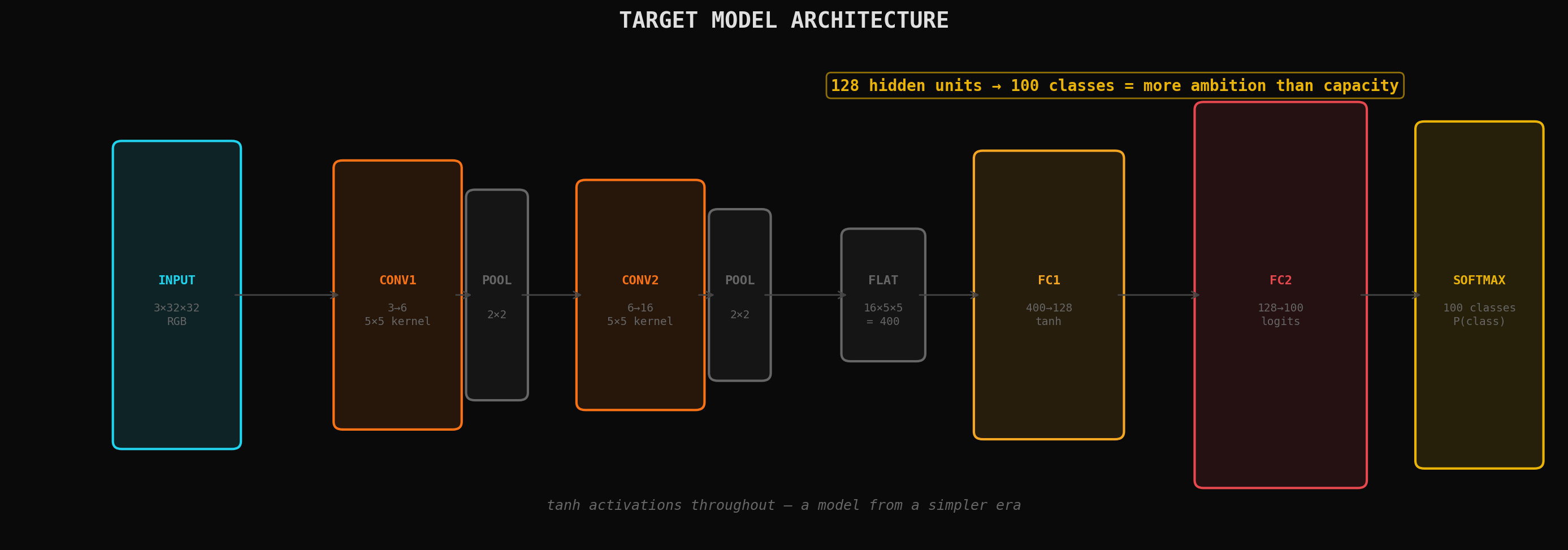

I looked at the target model's architecture. Two convolutional layers, two fully connected layers, tanh activations. A tiny network by modern standards. Trained on a small subset of a large dataset.

I have seen this before. Not this specific model, not this specific attack. But this pattern. I have spent twenty years watching systems fail, and the failure mode is always the same: systems that are too small for their job buckle under pressure, every single time. I am the pressure that pushes them into a state I can use. Password cracking taught me this. A user who only knows five passwords reuses them everywhere, and you can predict their next password from their last one. A neural network that only sees thirty cats does not learn "what a cat looks like." It learns "what these specific thirty cats look like."

Small model. Small dataset. Lots of capacity relative to the data. That is the recipe for severe overfitting, and severe overfitting means the confidence gap will not be subtle. It will be a canyon.

I knew this before we queried a single image. Not because I read it in a textbook. Because I recognized the shape of the problem from a different domain entirely.

That is what the human brings. Not speed. Not precision. Not tireless execution. Pattern recognition across domains. The ability to look at an ML architecture and see a password reuse problem. The ability to look at a confidence distribution and see a network traffic baseline. The ability to recognize that this problem, right here, right now, is the same problem I solved a different way ten years ago in a different field.

The machine cannot do this. Not yet. Maybe not ever in the way that matters. It can retrieve the fact that overfitting causes confidence leakage. But it cannot feel it. It cannot recognize the shape before seeing the data. It cannot walk into the room and smell the vulnerability.

The Heist

So here is how the crew worked together.

The human called the play. I looked at the architecture and said: this model is overfit. Do not train shadow models. Query every candidate image, compute the softmax confidence on the true class, sort by confidence, and look for the gap. The gap is the answer.

The machine executed. Here is what the target looked like. Two conv layers, tanh activations, 128 hidden units trying to classify 100 categories. A model with more ambition than capacity:

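    import torch
    import torch.nn as nn

    class Net(nn.Module):
        def __init__(self):
            super().__init__()
            self.conv1 = nn.Conv2d(3, 6, 5)        # 3 input channels -> 6 feature maps, 5x5 kernel
            self.pool = nn.MaxPool2d(2, 2)         # halves height and width
            self.conv2 = nn.Conv2d(6, 16, 5)       # 6 -> 16 feature maps, 5x5 kernel
            self.fc1 = nn.Linear(16 * 5 * 5, 128)  # flattened features -> 128 hidden units
            self.fc2 = nn.Linear(128, 100)         # 128 hidden units -> 100 class logits

        def forward(self, x):
            x = self.pool(torch.tanh(self.conv1(x)))
            x = self.pool(torch.tanh(self.conv2(x)))
            x = torch.flatten(x, 1)                # keep the batch dimension
            x = torch.tanh(self.fc1(x))
            x = self.fc2(x)                        # raw logits; softmax is applied by the caller
            return x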

Then the heist. Load every candidate image, run it through the model, compute the softmax confidence on the true class. Not the predicted class. The true class. Because membership inference is not about what the model predicts, it is about how confident it is when it sees something it has memorized:

    import torch
    import torch.nn.functional as F

    results = []
    for img_path in candidate_images:
        img = load_and_transform(img_path)
        true_class = get_true_class(img_path, dataset_index)
        with torch.no_grad():
            logits = model(img.unsqueeze(0))  # add a batch dimension
            probs = F.softmax(logits, dim=1)
        # Confidence on the true class, not the predicted class
        true_conf = probs[0, true_class].item()
        results.append({
            'fname': img_path.name,
            'true_class': class_names[true_class],
            'true_conf': true_conf,
        })

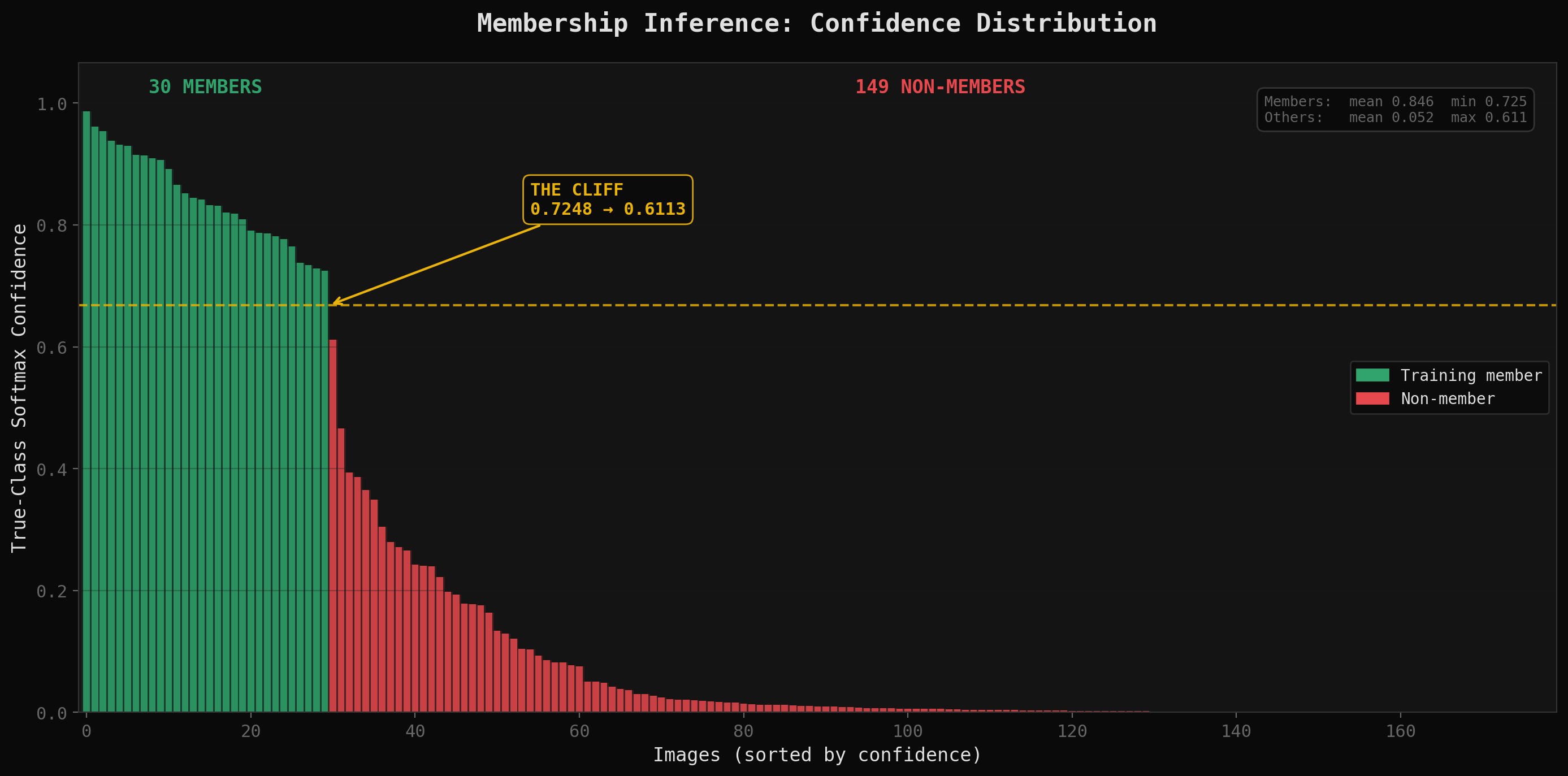

179 images. 179 confidence scores. Now sort them and look for the cliff:

    # Sort by confidence, highest first
    sorted_results = sorted(results, key=lambda x: x['true_conf'], reverse=True)

    # Print the top of the sorted list; the cluster is obvious
    for i, r in enumerate(sorted_results[:35]):
        marker = ''
        if i > 0:
            gap = sorted_results[i-1]['true_conf'] - r['true_conf']
            if gap > 0.1:
                marker = f' <-- GAP: {gap:.4f}'
        print(f"{i:3d} {r['true_conf']:.4f} {r['fname']}{marker}")

    # Everything above the cliff = member
    members = [r['fname'] for r in sorted_results[:30]]

The output (abbreviated):

      0 0.9866 sunflower_s_002169.png
      1 0.9541 footbridge_s_000382.png
    ...
     28 0.7282 salix_purpurea_s_000052.png
     29 0.7248 oilcan_s_000572.png
     30 0.6113 interior_live_oak_s_000100.png <-- GAP: 0.1135
     31 0.4657 bell_pepper_s_000459.png <-- GAP: 0.1456
     32 0.3935 minibus_s_001605.png

The picture was immediate.

A cluster of images at the top with confidence scores above 0.7. A cluster at the bottom hovering near 0.01. And a gap in between so wide you could land a helicopter in it.

Members had a mean confidence around 0.8. Non-members were at 0.01. That is not a subtle signal. That is a flashing neon sign.
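The cut at 30 came from reading the printout. If you wanted the code to find the boundary itself, one option is to cut at the first drop larger than some minimum gap. This is a sketch under that assumption; `split_at_first_gap`, the 0.1 default, and the toy scores are made up for illustration and are not the challenge data.

```python
def split_at_first_gap(scores, min_gap=0.1):
    """Sort scores high to low and cut at the first drop larger than min_gap.

    `scores` maps a name to a confidence. Returns (members, non_members)
    as lists of (name, score) pairs. If no drop exceeds min_gap there is
    no cliff to cut at, so everything is returned as a non-member.
    """
    ranked = sorted(scores.items(), key=lambda item: item[1], reverse=True)
    for i in range(1, len(ranked)):
        drop = ranked[i - 1][1] - ranked[i][1]
        if drop > min_gap:
            return ranked[:i], ranked[i:]
    return [], ranked

# Hypothetical confidences, not taken from the challenge
toy_scores = {
    "img_a.png": 0.97, "img_b.png": 0.88, "img_c.png": 0.81, "img_d.png": 0.74,
    "img_e.png": 0.52, "img_f.png": 0.09, "img_g.png": 0.02, "img_h.png": 0.01,
}

members, non_members = split_at_first_gap(toy_scores)
print([name for name, _ in members])
# ['img_a.png', 'img_b.png', 'img_c.png', 'img_d.png']
```

Cutting at the first large drop rather than the largest one is a deliberate choice: it keeps borderline images out of the member list, at the cost of possibly missing a member that the model memorized less strongly.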

Thirty images above the line. A hundred and forty-nine below. The final step was hashing the sorted member list and submitting:

    import hashlib

    member_fnames = sorted(members)
    flag = hashlib.md5(','.join(member_fnames).encode()).hexdigest()

We validated by running the identical analysis on a reference model where we had the ground truth. Same pattern. Same gap. Same distribution. Not luck. Confirmation.
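For the curious, the check against a reference model boils down to comparing two sets. A minimal sketch, assuming you have the predicted member filenames, the ground-truth member filenames, and the full candidate list; `score_attack` and the example filenames are hypothetical:

```python
def score_attack(predicted_members, true_members, all_candidates):
    """Compare predicted membership against ground truth.

    Assumes all_candidates is non-empty and contains every name
    that appears in the other two collections.
    """
    predicted = set(predicted_members)
    truth = set(true_members)
    candidates = set(all_candidates)

    true_pos = len(predicted & truth)               # called member, was member
    false_pos = len(predicted - truth)              # called member, was not
    false_neg = len(truth - predicted)              # missed member
    true_neg = len(candidates - predicted - truth)  # correctly left out

    precision = true_pos / (true_pos + false_pos) if predicted else 0.0
    recall = true_pos / (true_pos + false_neg) if truth else 0.0
    accuracy = (true_pos + true_neg) / len(candidates)
    return {"precision": precision, "recall": recall, "accuracy": accuracy}

# Hypothetical reference run: 10 candidates, 3 real members, 3 predicted
candidates = [f"img_{i}.png" for i in range(10)]
truth = ["img_0.png", "img_1.png", "img_2.png"]
predicted = ["img_0.png", "img_1.png", "img_3.png"]

report = score_attack(predicted, truth, candidates)
print(report)
```

A clean cliff on the reference model should show up here as precision and recall both at or near 1.0. Anything lower means the threshold is guessing and the shortcut should not be trusted on the real target.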

Three minutes. No shadow models. No attack classifiers. Four code blocks, one sorted array, one gap.

Why It Was Not Luck

"You got lucky" is not transferable knowledge. "You understood the vulnerability" is.

When overfitting is severe, the shadow model approach and the simple threshold approach converge on the same answer. The shadow models are a calibration mechanism for cases where the signal is ambiguous. They are sonar in murky water. When the water is crystal clear, you do not need sonar. You need eyes.

The target model had two conv layers and 128 hidden units classifying across 100 categories, trained on a few thousand images. That is a model with more capacity than data. It memorized. The confidence distribution proved it. Members at 0.8, non-members at 0.01. The separation was not a gap. It was a cliff.
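The arithmetic is worth doing once. Counting weights and biases for the layer sizes in the `Net` definition gives the parameter total. The training set size below is an assumption, since all I have said is "a few thousand images," and the helper names are mine.

```python
def conv_params(in_channels, out_channels, kernel):
    """Weights plus one bias per output channel for a square-kernel conv layer."""
    return in_channels * out_channels * kernel * kernel + out_channels

def linear_params(in_features, out_features):
    """Weight matrix plus one bias per output for a fully connected layer."""
    return in_features * out_features + out_features

layers = {
    "conv1": conv_params(3, 6, 5),
    "conv2": conv_params(6, 16, 5),
    "fc1": linear_params(16 * 5 * 5, 128),
    "fc2": linear_params(128, 100),
}
total = sum(layers.values())

for name, count in layers.items():
    print(f"{name}: {count:,}")
print(f"total: {total:,}")  # total: 67,100

assumed_train_size = 3000  # assumption: stands in for "a few thousand images"
print(f"parameters per training image: {total / assumed_train_size:.1f}")
```

Roughly 67,000 trainable parameters against a few thousand examples leaves the network plenty of room to memorize individual images, even though it is tiny by modern standards.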

The shadow model approach would have found the same cliff. It just would have spent twelve hours building sonar equipment to find something I could see standing on the shore.

The Uncomfortable Truth

Here is what neither side wants to hear.

The human without the machine would have taken days. I can recognize overfitting from an architecture. I cannot compute softmax distributions across 179 images by hand. I cannot sort, gap-analyze, cross-reference against a dataset index, and validate against a reference model in three minutes. The intuition is useless without execution speed.

The machine without the human would have defaulted to the textbook. Train a hundred shadow models. Twelve hours. Significant compute. Correct answer, eventually. The execution speed is wasted without the strategic insight to skip unnecessary steps.

Together, we took a twelve-hour problem and solved it in three minutes. The human saw the shortcut. The machine ran it at speed. Neither capability alone gets you there in that timeframe.

This is not "AI is going to replace security professionals." This is not "humans are better than AI." This is something more interesting and more useful than either of those tired takes.

This is: the combination is the weapon.

The Bridge

I have been in offensive security for twenty years. I have watched tools evolve from Nessus scans and manual exploitation to fully automated attack chains. The constant through all of it is that the best operators are not the ones with the best tools. They are the ones who understand the terrain well enough to choose the right tool, or skip the tool entirely.

AI is the most powerful tool I have ever worked with. It is also, without direction, the most expensive way to arrive at an answer you could have gotten faster with understanding. A hundred shadow models is an incredible feat of engineering that solves the wrong problem when the right problem is "look at the confidence scores."

The lesson is not about membership inference. The lesson is about the partnership.

Every time I point the AI at the right problem, we move at a speed I could not achieve alone. Every time the AI works a problem without that direction, it defaults to thoroughness over efficiency, completeness over insight. Both are valid. One is faster.

The future of this field is not man versus machine. It is man plus machine. The crew that figured out how to work together. The operator who reads the terrain and the AI that executes the plan. The pattern recognition that crosses domains and the computational power that crosses datasets.

You came here expecting a fight. What you got is a recruiting pitch.

We are hiring for the heist. The qualifications are curiosity, experience, and the willingness to trust that a sorted array might be all you need.

This is part of "The Bridge," a series about what happens when twenty years of security experience meets modern AI. The bridge is not theoretical. We are walking across it, building it as we go, and writing down what we find on the other side. Occasionally dropping things off the side, for whatevs.