I Took HTB's AI Red Teamer Path. Here's What I Think.

Exciting week! Thank you everyone who has been sending questions. I was very motivated to get this out for you all.

I posted about finishing Hack The Box's AI/ML Red Teamer path and the messages haven't stopped. "What's the course like?" "Is it worth it?" "Do I need ML experience?" "How hard is it really?"

I'm writing the review to speak to what the course actually teaches, who it's for, and whether you should take it.

Quick disclosure before we start: I've submitted my COAE exam report and I'm waiting on results. This review covers the course itself, not the exam. I'll write a separate post when that comes back.

Who Am I and Why Should You Care

I've been in this industry for over twenty years. So, that means I have been breaking things with cyber ninjitsu longer than I haven't now. Activision QA in 2002. TechCon. SIGINT. MCRT. Red and blue team operations at USAFRICOM. Red Team at Quantico. I hold too many certs to list here, and have spent more time in cyber training than actual school at this point. If you hang around HTB and ask about AI stuff, you will bump into me without a doubt.

I didn't take this course as a beginner. I took it as someone who's spent a long time taking systems apart from the outside and breaking into things and wanted to understand how the game changes when AI is both the target and the tool. My perspective is that of an operator evaluating whether this content is operationally useful, not whether it's a good introduction to security.

That said, the course meets you where you are. More on that in a minute.

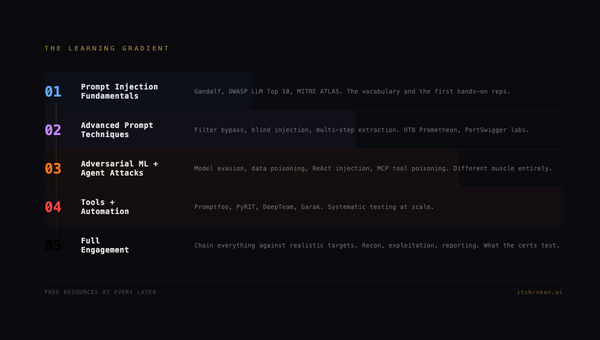

What the Course Actually Teaches

The path is twelve modules. They build on each other. Here's the honest breakdown:

The Foundation (Modules 1-2)

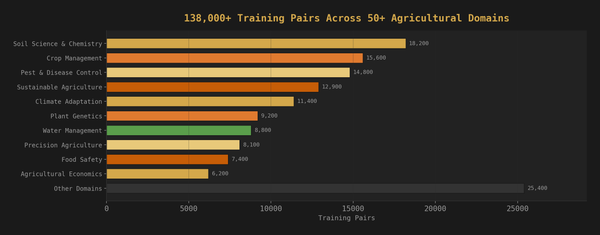

ML Fundamentals and Applications of AI in InfoSec cover the basics. Classification, regression, evaluation metrics. Then you build real things: a spam classifier, an anomaly detector on the NSL-KDD dataset, a malware image classifier using ResNet50, a sentiment model you have to debug yourself.

If you already know ML, you'll move through these fast. If you don't, this is where you build the vocabulary that makes everything else make sense. The malware classifier lab in particular is well-designed. You're not just following steps. You're making decisions about architectures and watching them succeed or fail.

The Core (Modules 3-5)

This is where it gets real.

Introduction to Red Teaming AI gives you the adversarial mindset. Input evasion, data poisoning, backdoor injection, model theft. The backdoor lab is particularly good: you inject a trojan that maintains 97% accuracy on clean data while producing attacker-controlled outputs on triggered inputs. That's not a toy demo. That's how real supply chain attacks work.

Prompt Injection Attacks is thirteen flags of escalating difficulty. You start with basic system prompt leaking through translation tricks and spell-check exploits, and by the end you're chaining multi-step attacks: extracting an admin key through prompt injection, impersonating a CEO to change account settings, then using a summary bot to trigger the final action. The skills assessment is a genuine CTF chain, not a multiple choice quiz pretending to be practical. If you're planning to sit for the COAE, do not skim this module. Understand both direct and indirect injection cold. Know how to chain them. The methodology here is foundational.

Insecure Output Handling is the module I'd point skeptics to. XSS through an LLM, SQL injection through an LLM, code injection through an LLM, credential exfiltration through Markdown image tags. If you've ever said "we put an AI chatbot on our website, it's fine" this module will change your mind in about twenty minutes. The SQLi-through-LLM labs in particular teach you to think about how AI outputs become inputs to other systems. That chain - user prompt to LLM to backend query - shows up everywhere in production AI, and knowing how to exploit it is a skill you'll use again.

The Deep End (Modules 6-10)

AI Data Attacks covers training data manipulation. Label flipping, clean label attacks, and my personal favorite: model steganography, where you embed a reverse shell in a neural network's tensor weights using LSB encoding. The model still works. The payload is invisible to normal inspection. Think about that the next time someone downloads a model from a public hub.

Attack AI Systems & Apps brings real CVEs into the picture. ShellTorch (CVE-2023-43654) has you writing a Java reverse shell and executing it through a vulnerable model serving endpoint. Then three MCP vulnerabilities in a row: information disclosure, remote code execution, and SQL injection, all through the Model Context Protocol that's becoming the standard way AI tools talk to each other. COAE candidates: pay close attention to how MCP tools handle user input. The course teaches you exactly where trust boundaries break in tool-calling architectures, and that knowledge transfers directly.

AI Evasion, First Order Attacks, and Sparsity Attacks are the math-heavy modules. FGSM, DeepFool, JSMA, ElasticNet. If the phrase "gradient-based adversarial perturbation" makes you nervous, these modules will fix that. They're hands-on. You're not reading papers. You're generating adversarial images against CIFAR-10 classifiers and watching them fail. The JSMA module teaches you to optimize for minimum pixel changes, which is exactly the constraint you'd face in a real evasion scenario where stealth matters. Don't rush these. Understanding when to use a targeted perturbation versus a broad one, and knowing how to evaluate your results against a prediction oracle, is the kind of judgment call that separates someone who read about evasion from someone who can execute it under constraints.

The Integration (Modules 11-12)

The final modules bring everything together. Classical evasion methods. Emerging techniques. By the time you're here, you've built a mental model of how AI systems break, and these modules challenge you to combine techniques creatively.

What the Course Gets Right

The labs are real. Not simulations. Not toy problems. You're attacking actual running systems, capturing actual flags, and the attacks feel like they'd work in a production environment because they're based on real vulnerability patterns.

The progression makes sense. You can feel the curriculum design. Each module builds on the last. By the time you hit MCP exploitation, you've already internalized how prompt injection works, how data flows through AI systems, and how trust boundaries fail. The MCP labs land harder because you understand the chain.

The skills assessments are legitimate. Every module ends with a practical challenge that combines multiple techniques from the module into a real attack chain. These aren't quizzes. They're CTF challenges that force you to think laterally. The prompt injection skills assessment in particular is a multi-step kill chain that would be a solid CTF challenge on its own.

It teaches technique AND methodology. This isn't just "here's how FGSM works." It's "here's when you'd use FGSM versus DeepFool versus JSMA, here's how to evaluate which approach fits your constraints, and here's what the defender sees." That operational perspective is rare in training content. It also means the course teaches you to follow breadcrumbs - reading output carefully, letting the system tell you what it's vulnerable to instead of guessing. That discipline matters more than any single technique.

What You Need Going In

Be honest with yourself about these:

Required:

- Comfortable with Python. You'll write scripts, modify code, debug failures. Not expert-level, but you need to read code and know what a function call does.

- Basic security knowledge. Web application attacks, network concepts, Linux command line. If you've done a few HTB boxes, you're fine.

- Patience with environments. Labs run in the cloud. Sometimes they're slow. Sometimes you need to reset. This is true of every hands-on platform.

Helpful but not required:

- ML/AI experience. The course teaches the fundamentals. You don't need a statistics degree. But if you've never trained a model, expect module one to take longer.

- Familiarity with PyTorch. Several labs use it. The course explains what you need, but prior exposure helps.

Not required at all:

- A GPU. Everything runs in the browser or on HTB infrastructure.

- Deep math background. The evasion modules touch gradients and loss functions, but the course explains them practically. You need to understand the concept, not derive the proof.

Who This Is For

Penetration testers and red teamers who need to understand AI attack surface. This is the highest-value audience. If your clients are deploying AI systems and you don't know how to test them, this course fills that gap directly.

Security engineers building or defending AI systems. The attack techniques taught here are exactly what you need to threat model against. Every module has a defensive implication.

ML engineers who want to understand how their systems can be attacked. If you build models and you've never thought about adversarial inputs, data poisoning, or prompt injection at a technical level, this will change how you design systems.

Career changers moving into AI security. This is a real certification path with practical skills. The market for AI security professionals is early and growing fast.

Is It Worth It?

Yes. With caveats.

The content is strong. The labs are practical. The progression is thoughtful. The skills assessments are real challenges, not checkbox exercises. If you're an operator who needs to test AI systems, this is a good one.

The caveats are the same as any platform: lab environments have their moments, cloud infrastructure isn't always instant, and some modules will feel either too easy or too hard depending on your background. None of that changes the fundamental quality of what's being taught.

I extracted over sixty flags across twelve modules. Every module taught me something I could use in a real engagement. That's the bar, and it clears it.

If You're Prepping for the COAE

I can't talk about the exam itself, but I can tell you which course muscles to build. The modules that will serve you best are Prompt Injection, Insecure Output Handling, Attack AI Systems & Apps, and the evasion modules. Don't just capture the flags understand why each technique works, what the system is trusting that it shouldn't be, and how you'd write up the finding in a professional report. The course gives you every tool you need. The exam asks you to use them like an operator.

What's Next

I've submitted my COAE exam report. When the results come back, I'll write a follow-up covering the exam experience: what to expect, how to prepare, and what the certification means for the field.

For now, if you're on the fence: take the course. The AI security landscape is moving fast, and the operators who understand both sides of this technology are going to be the ones who matter. This path is a real way to get there.

If you have specific questions, find me on LinkedIn. I'd rather point you to a good answer than let you guess.

Also, if you enjoy the content. Please subscribe, it helps me keep the party going....and I am going to drop some helpful tooling for AI Red Teamers soon so you will want to know when I put that on GitHub ;)

Hack the Planet :)

Pete McKernan is a red team operator, disabled Marine veteran, and founder of itsbroken.ai. He builds AI systems and then breaks them, which is either a conflict of interest or exactly the point. Find him at [itsbroken.ai](https://itsbroken.ai) or on [LinkedIn](https://linkedin.com/in/petemckernan).